Linear algebra is a fundamental part of modern mathematics. It supports fields from calculus and statistics to geometry, control theory, and functional analysis. Most linear systems are well understood. Even nonlinear problems are often studied through linear approximations.

Numerical linear algebra extends these ideas to computation, enabling solutions of PDEs, optimization tasks, eigenvalue problems, and more. It addressing some of the hardest problems in physics and engineering.

Motivations from Physics¶

Normal Modes: Vibrations near equilibrium reduce to generalized eigenvalue problems. Linear algebra therefore reveals resonance in materials, acoustics, and plasma waves.

Quantum Mechanics: Described by the Schrödinger equation, quantum systems are inherently linear.

Discretized PDEs: Discretizing PDEs yields large sparse linear systems. They can solved numerically by methods such as conjugate gradient.

Nonlinear Problems: Nonlinear physics problems including turbulence are sometimes untrackable. Linearizing them with perturbation theory reduces them to sequences of linear systems.

Motivations from Computation¶

Large-Scale Data: Modern sensors and simulations produce massive datasets. Matrix decompositions (e.g., SVD, PCA) provide compression, noise reduction, and feature extraction.

Neural Networks: Core operations in training, i.e., backpropagation, is dominated by large matrix multiplications. Efficient linear algebra routines are therefore critical for scaling deep learning.

Hardware Accelerators: GPUs and TPUs are optimized for matrix operations, making vectorized linear algebra essential for both neural networks and scientific computing.

Condition Number and Accuracy in Solving Linear Equations¶

When solving a linear system , accuracy depends not only on the algorithm but also on the conditioning of the matrix. The condition number describes how sensitive the solution is to small perturbations in the input.

Definition (2-norm)¶

If , the system is well-conditioned.

If , the system is ill-conditioned, and small changes in or can produce large errors in .

Error Amplification¶

If is perturbed by , the solution changes by . For small perturbations,

Thus the relative error in can be amplified by a factor of up to .

Interpretation¶

--102: problem is well-behaved; only a few digits may be lost.

: up to 6 digits of accuracy may be lost (in double precision with digits).

: essentially singular in double precision; solutions may be dominated by numerical noise.

In practice, estimates the number of accurate digits lost in the solution.

Direct Solvers¶

Direct methods are often the first approach taught for solving linear systems . They involve algebraic factorizations that can be computed in a fixed number of steps (roughly ) for an matrix.

Gaussian Elimination¶

Gaussian Elimination transforms the system into an equivalent upper-triangular form by systematically applying row operations. Once in an upper-triangular form, one can perform back-substitution to solve for .

Row Operations

Subtract a multiple of one row from another to eliminate entries below the main diagonal.

Aim to create zeros in column below row .

Partial Pivoting (optional)

When a pivot (diagonal) element is small (or zero), swap the current row with a row below that has a larger pivot element in the same column.

This step mitigates numerical instability by reducing the chance that small pivots lead to large rounding errors in subsequent operations.

Result

After eliminating all sub-diagonal entries, the matrix is in upper-triangular form .

Solve via back-substitution.

The matrix is now in echelon form (also called triangular form):

Below is a simple code for naive (no pivoting) Gaussian Elimination in python. Although normally we want to avoid for loops in python for performance, let’s stick with for loop this time so we can directly implement the algorithm we just described.

import numpy as np

def solve_Gaussian(A, b):

"""

Perform naive (no pivoting) Gaussian elimination to solve the

matrix equation A x = b.

Returns the solution vector x.

"""

assert A.ndim == 2 and A.shape[0] == A.shape[1] # must be square matrix

assert b.ndim == 1 and b.shape[0] == A.shape[1] # must be a vector

A = A.astype(float) # ensure floating-point, create copy by default

b = b.astype(float) # ensure floating-point, create copy by default

n = b.shape[0]

# Forward elimination

for k in range(n-1):

for i in range(k+1, n):

if A[k, k] == 0:

raise ValueError("Zero pivot encountered (no pivoting).")

factor = A[i, k] / A[k, k]

A[i, k:] -= factor * A[k, k:]

b[i] -= factor * b[k]

# Back-substitution

x = np.zeros(n)

for i in reversed(range(n)):

s = b[i]

for j in range(i+1, n):

s -= A[i, j] * x[j]

x[i] = s / A[i, i]

return xLet’s also compare with numpy’s solver.

A = np.random.random((3, 3))

b = np.random.random((3))

x_numpy = np.linalg.solve(A, b)

x_naive = solve_Gaussian(A, b)

print(x_numpy, "numpy")

print(x_naive, "naive")

print(abs(x_naive - x_numpy), "difference with niave")[ 1.31199067 0.86773422 -2.0787062 ] numpy

[ 1.31199067 0.86773422 -2.0787062 ] naive

[2.22044605e-16 5.55111512e-16 8.88178420e-16] difference with niave

Let’s set the (0,0) element to a small value.

A[0,0] = 1e-16

x_numpy = np.linalg.solve(A, b)

x_naive = solve_Gaussian(A, b)

print(x_numpy, "numpy")

print(x_naive, "naive")

print(abs(x_naive - x_numpy), "difference with niave")[-9.09087257 1.71955694 7.12358923] numpy

[-11.10223025 1.25642048 8. ] naive

[2.01135768 0.46313646 0.87641077] difference with niave

Let’s now improve the above naive (no pivoting) Gaussian elimination by adding pivoting.

# HANDSON: improve the above naive (no pivoting) Gaussian elimination

# by adding pivoting.

def solve_Gaussian_pivot(A, b):

"""

Perform Gaussian elimination with partial pivoting to solve

the matrix equation A x = b.

Returns the solution vector x.

"""

assert A.ndim == 2 and A.shape[0] == A.shape[1] # must be square matrix

assert b.ndim == 1 and b.shape[0] == A.shape[1] # must be a vector

A = A.astype(float) # ensure floating-point, create copy by default

b = b.astype(float) # ensure floating-point, create copy by default

n = b.shape[0]

# Forward elimination

for k in range(n-1):

# TODO: pivoting: find max pivot in column k

# TODO: swap rows if needed

for i in range(k+1, n):

### No longer a problem

# if A[k, k] == 0:

# raise ValueError("Zero pivot encountered (no pivoting).")

factor = A[i, k] / A[k, k]

A[i, k:] -= factor * A[k, k:]

b[i] -= factor * b[k]

# Back-substitution

x = np.zeros(n)

for i in reversed(range(n)):

s = b[i]

for j in range(i+1, n):

s -= A[i, j] * x[j]

x[i] = s / A[i, i]

return xx_naive = solve_Gaussian(A, b)

x_pivot = solve_Gaussian_pivot(A, b)

x_numpy = np.linalg.solve(A, b)

print(x_numpy, "numpy")

print(x_naive, "naive")

print(x_pivot, "pivot")

print(abs(x_naive - x_numpy), "difference with niave")

print(abs(x_pivot - x_numpy), "difference with pivot")[-9.09087257 1.71955694 7.12358923] numpy

[-11.10223025 1.25642048 8. ] naive

[-11.10223025 1.25642048 8. ] pivot

[2.01135768 0.46313646 0.87641077] difference with niave

[2.01135768 0.46313646 0.87641077] difference with pivot

# HANDSON:

#

# Change A and b to larger matrices.

# Again set A[0,0] to a small value.

#

# Test how solve_Gaussian() and solve_Gaussian_pivot() perform

# compared to numpy.linalg.sovle().

Decomposition¶

Decomposition is a systematic way to express as , where:

is a permutation matrix

is lower triangular (with 1s on the diagonal following the standard convention).

is upper triangular.

Once is factored as , solving becomes:

(forward substitution)

(back-substitution)

Gaussian elimination essentially constructs the and matrices behind the scenes:

The multipliers used in the row operations become the entries of .

The final upper-triangular form is .

import scipy.linalg as la

A = np.random.random((3, 3))

b = np.random.random((3))

# Perform LU decomposition with pivoting

P, L, U = la.lu(A) # P is the permutation matrix

print("Permutation matrix P:")

print(P)

print("Lower triangular matrix L:")

print(L)

print("Upper triangular matrix U:")

print(U, end="\n\n")

# Forward substitution for L y = Pt b

y = la.solve_triangular(L, np.dot(P.T, b), lower=True, unit_diagonal=True)

# Back substitution for U x = y

x_LU = la.solve_triangular(U, y)

print(x_LU, "LU decomposition")

print(np.linalg.solve(A, b), "numpy")Permutation matrix P:

[[0. 0. 1.]

[1. 0. 0.]

[0. 1. 0.]]

Lower triangular matrix L:

[[ 1. 0. 0. ]

[ 0.06003735 1. 0. ]

[ 0.83219309 -0.01887319 1. ]]

Upper triangular matrix U:

[[ 0.94762396 0.89939758 0.98510317]

[ 0. 0.79250809 0.40386857]

[ 0. 0. -0.48963738]]

[-0.7890113 0.99760099 0.22730616] LU decomposition

[-0.7890113 0.99760099 0.22730616] numpy

# HANDSON:

#

# Keep A at 3x3 but set A[0,0] to a small value.

# How does scipy.linalg.lu()'s solution compared to

# numpy.linalg.sovle()?

#

# Change A and b to larger matrices, and again set A[0,0] to a small

# value.

# How does scipy.linalg.lu()'s solution compared to

# numpy.linalg.sovle()?

Matrix Inverse¶

Although the matrix inverse is a central theoretical concept, explicitly forming just to solve is almost always unnecessary and can degrade numerical stability. Instead, direct approaches (like Gaussian Elimination or LU factorization) find with fewer operations and less error accumulation. Both methods cost about , but computing the entire inverse introduces extra steps and can magnify floating-point errors.

In rare cases, you might actually need .

For example, when:

Multiple Right-Hand Sides: If you must solve for many different , you might form or approximate for convenience.

Inverse-Related Operations: Some advanced algorithms (e.g., computing discrete Green’s functions or certain control theory design methods) explicitly require elements of the inverse.

In the demonstration below, we show how to compute the inverse using

np.linalg.inv().

However, again, it is generally safer and more efficient to solve

individual systems via a factorization method in practice.

# Simple 2x2 matrix

A = np.array([[2.0, 1.0],

[1.0, 2.0]], dtype=float)

# Compute inverse using NumPy

A_inv = np.linalg.inv(A)

print("Inverse of A:")

print(A_inv)

# Verify A_inv is indeed the inverse

I_check = A @ A_inv

print("Check A * A_inv == I?")

print(I_check)Inverse of A:

[[ 0.66666667 -0.33333333]

[-0.33333333 0.66666667]]

Check A * A_inv == I?

[[1. 0.]

[0. 1.]]

# HANDSON:

#

# Construct a larger matrix, maybe with `numpy.random.random()`,

# and then use `numpy.linalg.inv()` to compute its inverse.

# Check the inversion actually works.

# HANDSON:

#

# Compare the numerical solution of Ax = b by using

# `numpy.linalg.solve()` and by using `numpy.linalg.inv()` and then by

# matrix multiplication.

Iterative Solvers for Large/Sparse Systems¶

Direct methods are powerful but quickly become impractical when dealing with very large or sparse systems. In such cases, iterative solvers provide a scalable alternative.

Large-Scale Problems: PDE discretizations in 2D/3D can easily lead to systems with millions of unknowns, making direct approaches infeasible.

Sparse Matrices: Many physical systems produce matrices with a significant number of zero entries. Iterative methods may be implemented to access only nonzero elements each iteration, saving time and memory.

Scalability: On parallel architectures (clusters, GPUs, etc), iterative methods often scale better than direct factorizations.

Jacobi Iteration¶

Idea: Rewrite as , where is the diagonal of and is the remainder.

Update Rule:

Pros/Cons:

Easy to implement: each iteration updates using only values from the previous iteration.

Slow convergence unless is well-conditioned (e.g., diagonally dominant).

def solve_Jacobi(A, b, max_iter=1000, tol=1e-8):

"""

Solve A x = b using Jacobi iteration.

A is assumed to be square with non-zero diagonal.

"""

assert A.ndim == 2 and A.shape[0] == A.shape[1] # must be square matrix

assert b.ndim == 1 and b.shape[0] == A.shape[1] # must be a vector

A = A.astype(float) # ensure floating-point, create copy by default

b = b.astype(float) # ensure floating-point, create copy by default

n = A.shape[0]

# Jacobi iteration

x = np.zeros(n)

for k in range(max_iter):

x_old = np.copy(x)

for i in range(n):

# Sum over off-diagonal terms

s = 0.0

for j in range(n):

if j != i:

s += A[i,j] * x_old[j]

x[i] = (b[i] - s) / A[i, i]

# Check for convergence

if np.linalg.norm(x - x_old, ord=np.inf) < tol:

print(f"Jacobi converged in {k+1} iterations.")

return x

print("Jacobi did not fully converge within max_iter.")

return x# Example system (small, but let's pretend it's "sparse")

A = np.array([[4.0, -1.0, 0.0],

[-1.0, 4.0, -1.0],

[0.0, -1.0, 4.0]], dtype=float)

b = np.array([6.0, 6.0, 6.0], dtype=float)

x_jacobi = solve_Jacobi(A, b)

x_numpy = np.linalg.solve(A, b)

print(x_jacobi, "Jacobi Interaction")

print(x_numpy, "direction (numpy)")

print(abs(x_jacobi - x_numpy), "difference")Jacobi converged in 20 iterations.

[2.14285714 2.57142857 2.14285714] Jacobi Interaction

[2.14285714 2.57142857 2.14285714] direction (numpy)

[1.99569117e-09 2.39482922e-09 1.99569117e-09] difference

# HANDSON:

#

# Change the tolerance by setting the keyword `tol` to different

# values.

# How does the numerical solution look like?

# How many more steps do it take to "converge" to the required

# tolerance level?

Gauss-Seidel Iteration¶

Gauss-Seidel iteration is closely related to Jacobi but usually converges faster, particularly if the matrix is strictly or diagonally dominant.

Idea:

Instead of using only the old iteration values (from step ), Gauss-Seidel uses the most recent updates within the same iteration.

This often converges faster because you incorporate newly computed values immediately rather than waiting for the next iteration.

Update Rule:

Note that is already partially updated (for ).

Convergence Properties:

Gauss-Seidel is shown to converge for strictly diagonally dominant matrices, and often outperforms Jacobi in practice.

Still relatively slow for large, ill-conditioned systems.

# HANDSON: update the Jacobi iteration code to Gauss-Seidel

def solve_GS(A, b, max_iter=1000, tol=1e-8):

"""

Solve A x = b using Gauss-Seidel iteration.

A is assumed to be square with non-zero diagonal.

"""

assert A.ndim == 2 and A.shape[0] == A.shape[1] # must be square matrix

assert b.ndim == 1 and b.shape[0] == A.shape[1] # must be a vector

A = A.astype(float) # ensure floating-point, create copy by default

b = b.astype(float) # ensure floating-point, create copy by default

n = A.shape[0]

# TODO: Gauss-Seidel iteration: what should be changed comared to Jacobi?

x = np.zeros(n)

for k in range(max_iter):

x_old = np.copy(x)

for i in range(n):

# Sum over off-diagonal terms

s = 0.0

for j in range(n):

if j != i:

s += A[i,j] * x_old[j]

x[i] = (b[i] - s) / A[i, i]

# Check for convergence

if np.linalg.norm(x - x_old, ord=np.inf) < tol:

print(f"Jacobi converged in {k+1} iterations.")

return x

print("Jacobi did not fully converge within max_iter.")

return x# HANDSON:

#

# Check that `solve_GS()` works as expected.

# HANDSON:

#

# Change the tolerance by setting the keyword `tol` to different

# values.

# How does the numerical solution look like?

# How many more steps do it take to "converge" to the required

# tolerance level?

# How does this compared to Jacobi?

Eigenvalue Problems¶

Eigenvalue problems show up in many physics applications, including normal mode analysis, quantum mechanics, and stability analyses.

Normal Modes

Vibrational analyses of structures or molecules reduce to . The solutions are eigenpairs .

Each eigenvector describes a mode shape; the eigenvalue is the squared frequency.

Quantum Mechanics

The Schrodinger equation in matrix form yields eigenvalues (energy levels) and eigenstates .

Collecting all eigenvectors (as columns) in a matrix diagonalizes if it is Hermitian.

Stability Analysis

A linearized system near equilibrium produces a Jacobian . Eigenvalues of show growth/decay rates of perturbations.

If real parts of eigenvalues are negative, the system is stable; if positive, it’s unstable.

Given an matrix , the eigenvalue problem seeks scalars (eigenvalues) and nonzero vectors (eigenvectors) such that:

Eigenvalues can be real or complex.

Eigenvectors identify the directions in which acts as a simple scale transformation ().

Power Method for the Dominant Eigenpair¶

The power method is a straightforward iterative technique for finding the eigenpair associated with the largest-magnitude eigenvalue:

Algorithm:

Begin with an initial vector .

Apply repeatedly and normalize:

This converges to the eigenvector of the eigenvalue with the largest magnitude, assuming it’s distinct.

Limitations

Only computes one eigenvalue/eigenvector.

Convergence can be slow if other eigenvalues have magnitude close to the dominant one.

def eig_power(A, max_iter=1000, tol=1e-7):

"""

Returns the eigenvalue of largest magnitude and its eigenvector.

"""

assert A.ndim == 2 and A.shape[0] == A.shape[1] # must be square matrix

A = A.astype(float) # ensure floating-point, create copy by default

n = A.shape[0]

w = np.ones(n)

lam = np.linalg.norm(w)

v = w / lam

lam_old = lam

for i in range(max_iter):

w = A @ v # matrix multiplication

lam = np.linalg.norm(w)

v = w / lam

if abs(lam - lam_old) < tol:

print(f"Power method converged in {i+1} iterations.")

return lam, v

lam_old = lam

return lam, v# Test the Power Method

A = np.array([[2, 1],

[1, 3]], dtype=float)

lam_dom, v_dom = eig_power(A)

print(lam_dom, v_dom, "power")

# Compare with NumPy

lams, vs = np.linalg.eig(A)

idx = np.argmax(np.abs(lams))

print(lams[idx], vs[:, idx], "numpy")

print('Testing:', A @ v_dom - lam_dom * v_dom)Power method converged in 10 iterations.

3.618033986170775 [0.52574439 0.8506426 ] power

3.618033988749895 [-0.52573111 -0.85065081] numpy

Testing: [-2.96825191e-05 1.83478376e-05]

# HANDSON

#

# Try the power method with larger matrices.

# How fast/slow it is for convergence?

# How does the result compared to `numpy.linalg.eig()`?

QR Algorithm for Dense Matrices¶

For dense matrices of moderate size, the algorithm (or related methods) is the workhorse for computing all eigenvalues and optionally eigenvectors.

Idea:

Repeatedly factor as , where is orthonormal and is upper triangular.

Set .

After enough iterations, becomes near-upper-triangular, revealing the eigenvalues on its diagonal.

Practical Approach

In Python, use

numpy.linalg.eigornumpy.linalg.eigh(for Hermitian matrices).These functions wrap optimized LAPACK routines implementing or related transformations, e.g., divide-and-conquer, multiple relatively robust representations (MRRR).

A = np.array([[4, 1, 2],

[1, 3, 1],

[2, 1, 5]], dtype=float)

lams, vs = np.linalg.eig(A)

print('Eigenvalues:', lams)

print('Eigenvectors:')

print(vs)

print('Testing:')

for i in range(vs.shape[1]):

print(A @ vs[:,i] - lams[i] * vs[:,i])Eigenvalues: [7.04891734 2.30797853 2.64310413]

Eigenvectors:

[[ 0.59100905 0.73697623 -0.32798528]

[ 0.32798528 -0.59100905 -0.73697623]

[ 0.73697623 -0.32798528 0.59100905]]

Testing:

[0.0000000e+00 8.8817842e-16 0.0000000e+00]

[ 2.22044605e-15 -4.44089210e-16 -6.66133815e-16]

[-1.11022302e-16 -2.22044605e-16 4.44089210e-16]

Application: Coupled Harmonic Oscillators¶

Harmonic oscillators are a classic problem in physics, in this hands-on, we will:

Derive or reference the analytical solution for two coupled oscillators.

Numerically solve the same system (using an eigenvalue approach).

Generalize to (and even ) coupled oscillators, visualizing the mode shapes.

Two Coupled Oscillators--Analytical Solution¶

Consider two masses connected by three springs (constant ), arranged in a line and connected to two walls:

|--k--[m]--k--[m]--k--|If each mass can move only horizontally, the equations of motion form a eigenvalue problem. Let:

be the horizontal displacement of Mass 1 from its equilibrium position.

be the horizontal displacement of Mass 2. We assume small oscillations, so Hooke’s law applies linearly.

Mass 1 experiences:

A restoring force from the left wall spring.

A coupling force from the middle spring: if , that spring pulls Mass 1 to the right; if , it pulls Mass 1 to the left. The net contribution is . Summing forces (Newton’s second law) gives:

Mass 2 experiences:

A restoring force from the right wall spring.

The coupling force from the middle spring: . Hence,

Rewrite each equation:

We can write and express the system as:

where

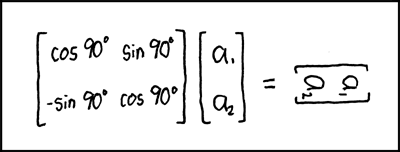

Equivalently,

This is a second-order linear system describing small oscillations.

We look for solutions of the form

where is the initial condition and is the (angular) oscillation frequency.

Explicitly, is:

The determinant is . Setting this to zero results

For each of the normal modes:

Lower Frequency : Plug back into . For instance,

This implies . Physically, the in-phase mode has both masses moving together.

Higher Frequency :

This yields . Physically, the out-of-phase mode has the two masses moving in opposite directions.

We can compute the position of these coupled oscillators according to the analytical solutions.

# Physical constants

m = 1.0 # mass

k = 1.0 # spring constant

# Frequencies for two normal modes

omegap = np.sqrt(k/m) # in-phase

omegam = np.sqrt(3*k/m) # out-of-phase

# Initial conditions

x1_0 = 0

x2_0 = 0.5

# The analytical solution:

def X_analytic(t):

xp_0 = (x1_0 + x2_0) / 2

xm_0 = (x1_0 - x2_0) / 2

xp = xp_0 * np.cos(omegap * t)

xm = xm_0 * np.cos(omegam * t)

return xp + xm, xp - xmPlot multiple frames:

from matplotlib import pyplot as plt

from matplotlib import animation

def mkanim(X, T):

fig = plt.figure(figsize=(8,8))

ax = plt.axes(xlim=(0, 3), ylim=(-1.5, 1.5))

ax.set_aspect('equal')

line, = ax.plot([], [], 'o-', lw=2)

def init():

line.set_data([], [])

return line,

def animate(i):

x1, x2 = X(T[i])

line.set_data([0, 1+x1, 2+x2, 3], [0, 0, 0, 0], )

return line,

anim = animation.FuncAnimation(fig, animate, init_func=init, frames=len(T), interval=10, blit=True)

plt.close()

return animfrom IPython.display import HTML

HTML(mkanim(X_analytic, np.linspace(0, 10, 250)).to_html5_video())Two Coupled Oscillators--Semi-Analytical/Numerical Solution¶

Instead of solving the coupled oscillator problem analytically, we can at least solve the eigenvalue part of the problem numerically.

# HANDSON: Step 1. rewrite the analytical solution in matrix form

# Physical constants

m = 1.0 # mass

k = 1.0 # spring constant

# Frequencies for two normal modes

Omega = np.array([...]) # this should become a numpy array

# Initial conditions

X0 = np.array([...]) # this should become a numpy array

M0 = ... # apply an transformation to rewrite the transformation in terms of eigenvectors

# The analytical solution in matrix notation:

def X_matrix(t):

M = M0 * np.cos(Omega * t)

return ... # apply an inverse transformation to rewrite the modes in terms of x1 and x2# Test ...

print(X_analytic(1))

print(X_matrix(1))# HANDSON: Step 2. Replace the manual solutions of eigenvalues Omega

# and the transform by calling `numpy.linalg.eig()`

# Coupling matrix

K = np.array([

[...],

[...],

])

# Initial conditions

X0 = np.array([...])

# The semi-analytical solution in matrix notation:

Omega = ...

M0 = ...

def X_matrix(t):

return ...# Test ...

print(X_analytic(1))

print(X_matrix(1))# HANDSON: Step 3. Generalize the solution to work for arbitrary

# number of coupled oscillators

# Coupling matrix

K = np.array([

[...],

[...],

[...],

])

# Initial conditions

X0 = np.array([...])

# The semi-analytical solution in matrix notation:

Omega = ...

M0 = ...

def X_matrix(t):

return ...# HANDSON: Step 4. Turn the outputs into a movie

HTML(mkanim(np.linspace(0, 10, 250)).to_html5_video())Future Topics¶

Singular Value Decomposition (SVD)¶

SVD factorizes . It reveals how a matrix stretches vectors in orthogonal directions. It works for non-square matrices, provides optimal low-rank approximations, is numerically stable, and is widely used for pseudoinverses, compression, and noise reduction.

Principal Component Analysis (PCA)¶

PCA applies SVD to centered data to find directions of maximum variance. It reduces dimensionality, highlights dominant modes, and simplifies noisy datasets, making it essential in physics, data modeling, and machine learning.

SVD and PCA are cores of dimensionality reduction. We may revisit them when we connect linear algebra to machine learning.